Serverless functions are powerful, but the dreaded cold start can significantly impact performance and user experience. At MisuJob, we’ve tackled this challenge head-on to ensure a seamless experience for professionals searching for their next opportunity.

Serverless Cold Starts: Understanding the Beast

Cold starts are inherent to serverless architectures. They occur when a serverless function is invoked after a period of inactivity. The cloud provider needs to allocate resources, initialize the runtime environment, and load the function code before it can handle the request. This initialization phase introduces latency, often noticeable to the end-user.

Several factors contribute to cold start duration:

- Language Runtime: Some runtimes, like Java and .NET, generally have longer cold starts than others, like Node.js or Python.

- Function Size: Larger function packages take longer to download and load.

- Dependencies: Complex dependency trees can increase startup time.

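- Memory Allocation: The memory setting affects cold start duration, and the direction depends on the cloud provider’s implementation. On AWS Lambda, CPU is allocated in proportion to memory, so a higher setting often shortens initialization rather than lengthening it.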

- Cloud Provider: Different cloud providers have varying infrastructure and optimization strategies, leading to performance differences.

At MisuJob, where we process 1M+ job listings and use AI-powered job matching to connect candidates with relevant roles across Europe, even a few hundred milliseconds of cold start latency can accumulate and impact the responsiveness of our platform. We constantly monitor and optimize our serverless functions to mitigate these effects.

Measuring Cold Starts: The Data-Driven Approach

Before attempting to optimize anything, you need to measure it. We use a combination of techniques to accurately track cold start times:

- CloudWatch Logs (AWS): We analyze CloudWatch logs for the “Init Duration” field, which Lambda adds to the REPORT line of an invocation that incurred a cold start and which records how long the function took to initialize.

- Custom Metrics: We log timestamps at the beginning and end of our function handler to capture handler execution time. Comparing it with the “Init Duration” separates initialization overhead from the time spent doing actual work.

- Synthetic Monitoring: We use tools to periodically invoke our functions and measure the response time, allowing us to identify cold start trends over time.

Here’s an example of how we inject custom logging in our Node.js functions:

```javascript
// index.js

// Module scope runs once per execution environment, so this flag is true
// only for the first invocation after a cold start.
let isColdStart = true;

exports.handler = async (event) => {
  const startTime = Date.now();
  console.log(`Function execution started (coldStart=${isColdStart}).`);
  isColdStart = false;

  // Simulate some work
  await new Promise((resolve) => setTimeout(resolve, 100));

  const duration = Date.now() - startTime;
  console.log(`Function execution completed in ${duration}ms`);

  return {
    statusCode: 200,
    body: JSON.stringify({ message: 'Hello from Lambda!' }),
  };
};
```

We then use a CloudWatch Logs Insights query to aggregate the logged handler durations per minute:

```
fields @timestamp, @message
| filter @message like "Function execution started" or @message like "Function execution completed"
| parse @message "Function execution completed in *ms" as duration_ms
| stats min(duration_ms), avg(duration_ms), max(duration_ms) by bin(1m)
```

Comparing these handler durations with the Init Duration that Lambda reports shows which functions spend the most time initializing, and we prioritize those for optimization.
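The “Init Duration” field mentioned earlier can also be pulled out of raw log lines in code. The following is a minimal sketch, assuming the tab-separated REPORT line layout that Lambda writes to CloudWatch; `parseReportLine` is a hypothetical helper name, not part of any AWS library.

```javascript
// Extracts timing fields from a Lambda REPORT log line.
// Assumption: fields are tab-separated, e.g. "Duration: 102.31 ms".
// "Init Duration" is only present when the invocation was a cold start.
function parseReportLine(line) {
  if (!line.startsWith('REPORT')) return null;

  // Collect every "Key: value" pair from the tab-separated fields.
  const fields = new Map();
  for (const part of line.split('\t')) {
    const match = part.trim().match(/^(?:REPORT )?([A-Za-z ]+): (.+)$/);
    if (match) fields.set(match[1], match[2]);
  }

  // parseFloat reads the leading number and ignores the trailing " ms".
  const ms = (name) => (fields.has(name) ? parseFloat(fields.get(name)) : null);
  const initDurationMs = ms('Init Duration');

  return {
    durationMs: ms('Duration'),
    initDurationMs,
    coldStart: initDurationMs !== null,
  };
}
```

Splitting on tabs rather than searching for the substring “Duration” avoids confusing “Duration” with “Billed Duration” or “Init Duration”.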

Mitigation Strategies: Taming the Cold Start Beast

Once you have a clear understanding of your cold start performance, you can implement various mitigation strategies:

1. Provisioned Concurrency

Provisioned Concurrency (AWS Lambda) allows you to pre-initialize a specified number of function instances, eliminating cold starts for those instances. This is an effective strategy for critical functions that require low latency.

We use Provisioned Concurrency for our AI-powered job matching API, ensuring that candidates receive near-instantaneous results. This comes at a cost, as you are paying for idle compute resources, but the improved user experience justifies the expense in our case.
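As a rough illustration, Provisioned Concurrency can be configured programmatically through the AWS SDK for JavaScript v3. This sketch assumes the `@aws-sdk/client-lambda` package is available; the function name, alias, and instance count in the usage note are hypothetical, and the helper names are ours.

```javascript
// Builds the request parameters for Lambda's PutProvisionedConcurrencyConfig API.
// Provisioned Concurrency is set on a published version or alias (the Qualifier).
function buildProvisionedConcurrencyParams(functionName, qualifier, instances) {
  if (!Number.isInteger(instances) || instances < 1) {
    throw new RangeError('instances must be a positive integer');
  }
  return {
    FunctionName: functionName,
    Qualifier: qualifier,
    ProvisionedConcurrentExecutions: instances,
  };
}

// Sends the configuration to Lambda. The SDK is required inside the function
// so the parameter builder above can be used and tested without it installed.
async function enableProvisionedConcurrency(functionName, qualifier, instances) {
  const {
    LambdaClient,
    PutProvisionedConcurrencyConfigCommand,
  } = require('@aws-sdk/client-lambda');

  const client = new LambdaClient({});
  const params = buildProvisionedConcurrencyParams(functionName, qualifier, instances);
  return client.send(new PutProvisionedConcurrencyConfigCommand(params));
}
```

A call such as `enableProvisionedConcurrency('job-matching-api', 'live', 5)` would keep five instances initialized for the `live` alias; you pay for those instances whether or not they serve traffic.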

2. Keep-Alive Mechanisms

Implement keep-alive mechanisms to keep your functions warm. This involves periodically invoking your functions to prevent them from becoming idle and incurring a cold start.

You can use a scheduled Amazon EventBridge rule (formerly CloudWatch Events), or similar functionality in other cloud providers, to trigger your function every few minutes. This approach is less expensive than Provisioned Concurrency but does not completely eliminate cold starts: a single scheduled ping keeps only one instance warm, so concurrent requests beyond that can still land on a cold instance. The frequency of invocation needs to be tuned to match the typical idle timeout of your cloud provider.
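On the function side, it helps to recognize the keep-alive ping and return early so that warm-up invocations stay cheap. A minimal sketch, assuming the ping arrives as a standard scheduled event (`source` of `aws.events` and `detail-type` of `Scheduled Event`); the response bodies are illustrative.

```javascript
// Returns true for events delivered by a scheduled EventBridge rule.
function isWarmUpEvent(event) {
  return (
    Boolean(event) &&
    event.source === 'aws.events' &&
    event['detail-type'] === 'Scheduled Event'
  );
}

async function handler(event) {
  if (isWarmUpEvent(event)) {
    // Keep-alive ping: skip the real work and return immediately.
    return { statusCode: 200, body: JSON.stringify({ warmed: true }) };
  }

  // Normal request handling goes here.
  return { statusCode: 200, body: JSON.stringify({ message: 'Hello from Lambda!' }) };
}

exports.handler = handler;
```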

3. Optimize Function Size and Dependencies

Reduce the size of your function package by removing unnecessary dependencies and optimizing your code. Use tools like webpack or esbuild to bundle your code and minimize the package size.

We found significant improvements by removing unused libraries from our function packages. For example, one function had a large image processing library that was only used in a small number of cases. By refactoring the code to load the library lazily, only when needed, we reduced the function package size by 40% and decreased the cold start time by 25%.
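The lazy-loading refactor might look roughly like the sketch below. Node's built-in `zlib` module stands in for the heavy library, and the `compress` and `payload` event fields are hypothetical; the point is only that the `require` call runs on the rarely used path instead of at cold start.

```javascript
// Holds the heavy module once it has been loaded; null until first use.
let heavyLib = null;

// Loads the module on first call and reuses it afterwards.
function getHeavyLib() {
  if (heavyLib === null) {
    heavyLib = require('zlib'); // stand-in for a large, rarely needed library
  }
  return heavyLib;
}

async function handler(event) {
  const payload = (event && event.payload) || '';

  if (event && event.compress) {
    // Rare path: only now do we pay the cost of loading the library.
    const compressed = getHeavyLib().gzipSync(Buffer.from(payload));
    return { statusCode: 200, body: compressed.toString('base64'), isBase64Encoded: true };
  }

  // Common path: the heavy library is never loaded.
  return { statusCode: 200, body: payload };
}

exports.handler = handler;
```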

4. Choose the Right Runtime

Consider the trade-offs between different runtimes. While Java and .NET may offer performance advantages for certain workloads, they often have longer cold start times than Node.js or Python.

For latency-sensitive functions, we often prefer Node.js or Python due to their faster startup times. However, for computationally intensive tasks, we may opt for Java or .NET, carefully balancing the cold start overhead with the overall performance gains.

5. Optimize Code Initialization

Avoid performing expensive initialization tasks in the function’s global scope when not every invocation needs them. Instead, perform these tasks lazily, only when they are needed.

For example, if your function connects to a database, create the connection on first use inside the function handler rather than unconditionally in the global scope, and keep it in a module-level variable. The connection is then established only when a request actually needs it, and warm invocations reuse it instead of reconnecting every time.
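A minimal sketch of lazy initialization for a database connection: the connection is created on first use inside the handler and then kept in a module-level variable so warm invocations reuse it. `connectToDatabase` is a hypothetical stand-in for a real database client, and `connectCount` exists only to make the behavior observable.

```javascript
// Hypothetical stand-in for a real database client's connect call.
let connectCount = 0;
async function connectToDatabase() {
  connectCount += 1;
  return { query: async (sql) => ({ sql, rows: [] }) };
}

// Module scope survives across warm invocations of the same instance.
let connectionPromise = null;

// Creates the connection on first call and reuses it afterwards.
// Caching the promise (not the result) prevents duplicate connects
// if two callers ask for the connection at the same time.
function getConnection() {
  if (connectionPromise === null) {
    connectionPromise = connectToDatabase().catch((err) => {
      connectionPromise = null; // allow a retry on the next invocation
      throw err;
    });
  }
  return connectionPromise;
}

async function handler(event) {
  const db = await getConnection();
  const result = await db.query('SELECT 1');
  return { statusCode: 200, body: JSON.stringify(result.rows) };
}

exports.handler = handler;
```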

6. Container Image Functions

Packaging your functions as container images can sometimes improve cold start times, especially if you have complex dependencies or require specific operating system configurations. Container images provide a consistent and reproducible environment, reducing the overhead associated with initializing the function’s runtime.

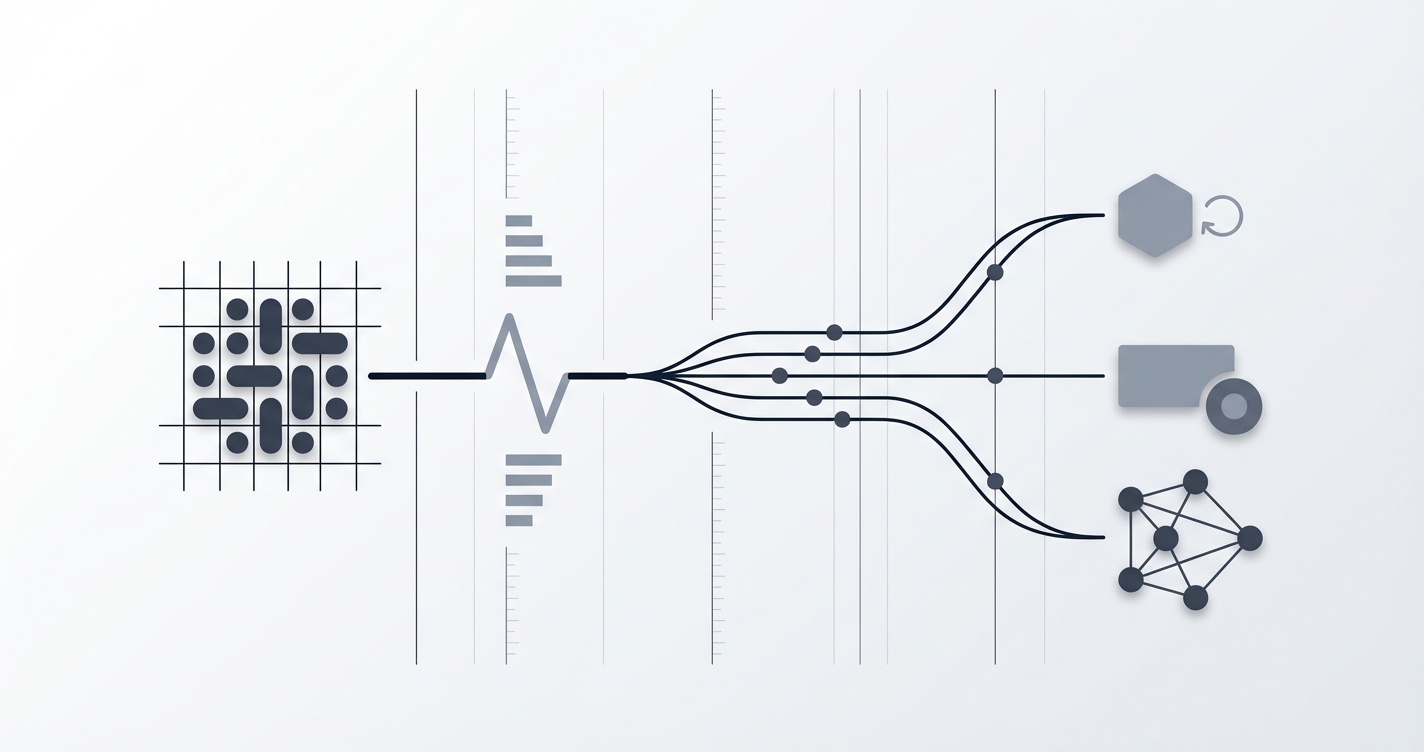

Architectural Patterns: Designing for Cold Start Resilience

In addition to the mitigation strategies described above, you can also design your architecture to be more resilient to cold starts:

1. Asynchronous Processing

For tasks that don’t require immediate responses, consider using asynchronous processing. This allows you to decouple the request from the execution, minimizing the impact of cold starts on the user experience.

For example, when a user uploads their CV to MisuJob, we use an event-driven architecture to process the document asynchronously. The user receives immediate feedback that the upload was successful, while the actual processing happens in the background.
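The shape of such a flow can be sketched as two handlers: one that acknowledges the upload immediately and one that does the slow work later. The in-memory array below is a stand-in for a real queue or event bus, and the handler names and `documentId` field are hypothetical.

```javascript
// In-memory stand-in for a real message queue or event bus.
const queue = [];
async function enqueue(job) {
  queue.push(job);
}

// Upload handler: records the job and acknowledges right away.
// 202 Accepted signals that processing will happen later.
async function uploadHandler(event) {
  const job = { documentId: event.documentId, receivedAt: Date.now() };
  await enqueue(job);
  return {
    statusCode: 202,
    body: JSON.stringify({ status: 'accepted', documentId: job.documentId }),
  };
}

// Worker handler: invoked separately, so a cold start here delays
// background processing but never the user's upload response.
async function workerHandler() {
  const processed = [];
  while (queue.length > 0) {
    const job = queue.shift();
    processed.push({ documentId: job.documentId, status: 'parsed' });
  }
  return processed;
}
```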

2. Caching

Implement caching to store frequently accessed data and reduce the need to invoke functions for every request. This can significantly improve performance and reduce the impact of cold starts.

We use a combination of in-memory caching (within the function itself) and external caching services (like Redis) to store job search results and user preferences.
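The in-memory part of this can be as small as a module-level map with expiry times. This is a sketch under the assumption that slightly stale data is acceptable for the cached keys; the `cached` helper and its `now` parameter (included to make expiry easy to test) are illustrative. The cache lives only as long as the function instance, so it starts empty after every cold start.

```javascript
// Module-level cache: shared by all warm invocations of this instance.
const cache = new Map();

// Returns the cached value for `key` if it has not expired; otherwise
// calls `loader`, stores the result for `ttlMs` milliseconds, and returns it.
async function cached(key, ttlMs, loader, now = Date.now()) {
  const hit = cache.get(key);
  if (hit && hit.expiresAt > now) {
    return hit.value;
  }
  const value = await loader();
  cache.set(key, { value, expiresAt: now + ttlMs });
  return value;
}
```

A handler could then wrap an expensive lookup, for example `await cached('search:berlin', 60000, () => runSearch('berlin'))`, where `runSearch` is whatever function performs the real query.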

3. API Gateway Optimization

Configure your API Gateway to optimize for latency. This includes enabling caching, using compression, and minimizing the number of hops between the client and the function.

We use API Gateway caching to store responses to common API requests, reducing the load on our backend functions and improving the overall responsiveness of our platform.

Real-World Examples: MisuJob’s Cold Start Journey

We’ve applied these techniques across our platform, resulting in significant improvements in cold start performance. Here are a few examples:

- Job Search API: By implementing Provisioned Concurrency for our core job search API, we reduced the average cold start time from 500ms to under 50ms, resulting in a much more responsive user experience.

- CV Parsing Service: By optimizing the function package size and lazy-loading dependencies for our CV parsing service, we reduced the cold start time by 30%.

- Recommendation Engine: We transitioned our recommendation engine to use container image functions, which improved the consistency of the runtime environment and reduced the cold start time by 20%.

Salary Implications for Serverless Engineers in Europe

The ability to optimize serverless architectures for performance, including minimizing cold starts, is a highly valued skill. Here’s a comparison of estimated average salaries for serverless engineers across different European countries (in EUR):

| Country/Region | Estimated Average Salary Range (EUR) |
|---|---|
| Germany (DACH) | 75,000 - 95,000 |
| United Kingdom | 65,000 - 85,000 |
| Netherlands | 70,000 - 90,000 |
| Nordics (Sweden, Norway, Denmark) | 72,000 - 92,000 |
| France | 60,000 - 80,000 |

These figures are estimates and can vary based on experience, specific skills, and company size. Mastering serverless technologies and demonstrating expertise in areas like cold start optimization can significantly increase your earning potential.

Conclusion: Embracing the Cold Start Challenge

Cold starts are a reality of serverless computing, but they don’t have to be a major obstacle. By understanding the underlying causes, measuring the impact, and implementing appropriate mitigation strategies, you can build serverless applications that are performant, scalable, and cost-effective. At MisuJob, we’re committed to continuously improving our serverless architecture to provide the best possible experience for job seekers across Europe.

Key Takeaways

- Cold starts are a common challenge in serverless architectures.

- Measure cold start times to identify areas for improvement.

- Use Provisioned Concurrency or keep-alive mechanisms for critical functions.

- Optimize function size and dependencies.

- Choose the right runtime for your workload.

- Design your architecture to be resilient to cold starts.

- Skills in optimizing serverless architectures are highly valued in the European job market.