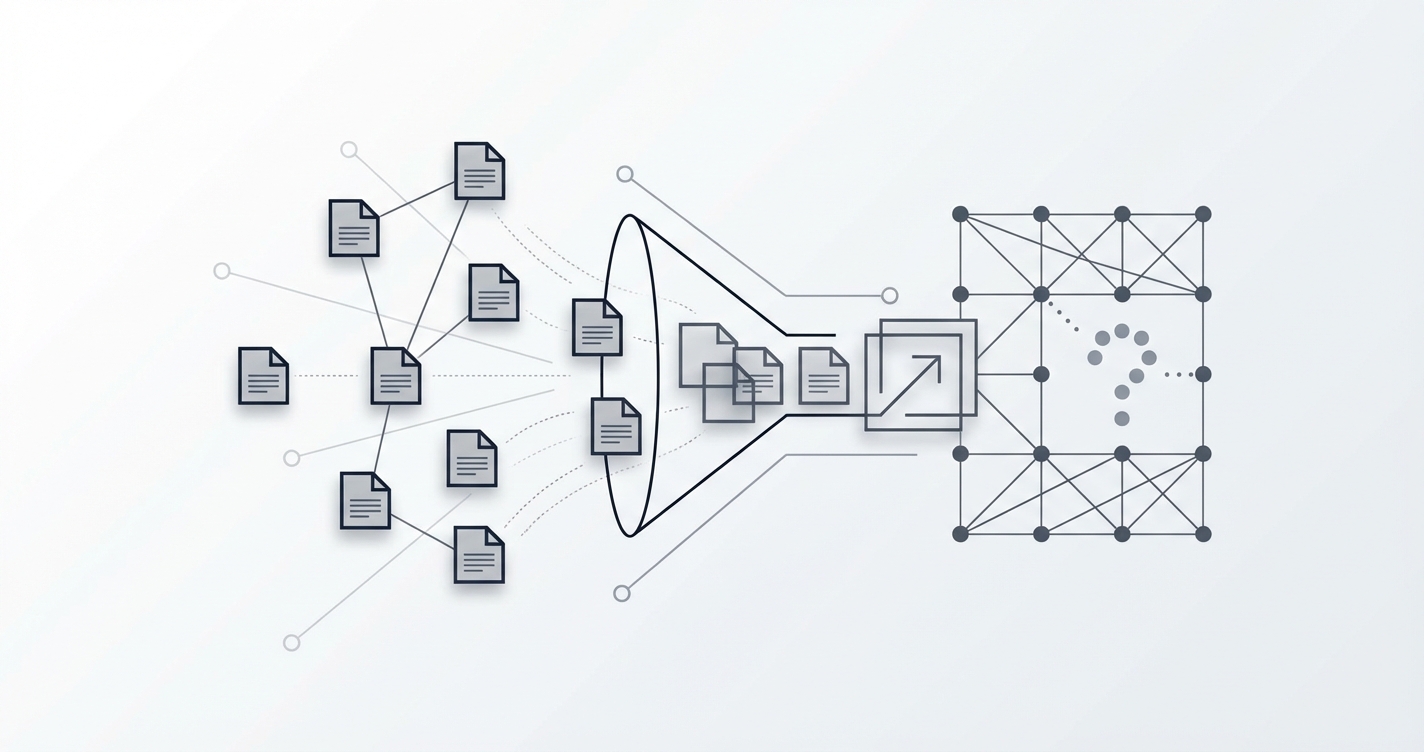

“Senior React Developer” at Spotify. Posted on one ATS. Syndicated to LinkedIn. Aggregated on 3 job boards. Imported by 5 platforms. That’s the same job appearing 10+ times in your database.

Deduplicating job listings is harder than you think. Here’s our multi-layer approach that catches 95% of duplicates — and why 100% is probably impossible.

Why Simple Matching Fails

-- This misses most duplicates

WHERE lower(title) = lower($1) AND lower(company) = lower($2)

Company name variations:

- “Deutsche Telekom” = “T-Systems” = “Telekom Deutschland”

- “Alphabet” = “Google” = “Google LLC” = “Google Ireland”

Title variations:

- “Sr. React Dev” = “Senior React Developer” = “React.js Engineer (Senior)”

- “SWE III” = “Software Engineer 3” = “Senior Software Engineer”

Same job, different IDs:

- Company archives and reposts with identical content but a new URL

Our Multi-Layer Approach

Layer 1: URL Match (100% precision, low recall)

const urlMatch = await pool.query(

'SELECT id FROM jobs WHERE url = $1 AND is_active = true',

[job.url]

);

If the exact URL exists, it’s definitely a duplicate. But the same job on different sources has different URLs, so this only catches republished jobs.

Layer 2: Title + Company (high precision, medium recall)

const contentMatch = await pool.query(`

SELECT id FROM jobs

WHERE lower(title) = lower($1)

AND lower(company) = lower($2)

AND is_active = true

`, [job.title, job.company]);

Catches identical postings across sources. Misses title and company name variations.

Layer 3: Content Hash (medium precision, catches reposts)

const descHash = crypto

.createHash('md5')

.update(job.description.substring(0, 500).toLowerCase())

.digest('hex');

const hashMatch = await pool.query(

'SELECT id FROM jobs WHERE content_hash = $1 AND is_active = true',

[descHash]

);

Catches reposted jobs with different titles/URLs but identical descriptions. Using only the first 500 chars avoids false negatives from minor footer changes.

Performance: The Index That Matters

Without this index, Layer 2 does a full table scan on 1M rows:

CREATE INDEX idx_jobs_content_dedup

ON jobs (lower(title), lower(company))

WHERE is_active = true;

Dedup checks went from 2+ seconds to < 10ms each. At 10,000 new jobs/day, that’s the difference between 5.5 hours and 1.7 minutes of dedup time.

What We Still Can’t Catch

- “Google” vs “Alphabet” (parent company mapping would help, but maintaining it is a full-time job)

- “Senior Engineer” vs “Staff Engineer” for the same role

- Jobs reposted with AI-rewritten descriptions (increasingly common)

95% accuracy is where we’ve landed. The remaining 5% would require NLP-based semantic matching, which isn’t worth the complexity at our scale.

This runs at MisuJob, keeping 1M+ listings clean across 50+ sources.

How do you handle dedup in your data pipeline? We’d love to hear alternative approaches.